AI <> Fungi

Meeting request

I was reading two books at the same time by coincidence, and the combination of perspectives has led me to some connections that I believe are relatively under-discussed.

What I think we need is more conversation between biologists and AI researchers. You’re solving the same problem – how do we understand intelligence that is fundamentally different from our own?

I’m not the first one to draw these parallels, but I don’t see these fields conversing at the level of depth that I would expect. I mean not just the neuroscientists, but also the ecologists, mycologists, animal behaviorists, and others who study non-human intelligence through a different lens.

For example, in September I attended an AI safety conference organized by PIBBSS. (I am not at all in this field, just randomly accepted a last-minute invite from a friend.) PIBBSS stands for Principles of Intelligent Behavior in Biological and Social Systems. PIBBSS’s stated purpose is “learning from biological and social systems for designing beneficial AI.” So you would think that this would be the organization that would make the connections I am talking about. But no one there talked about biology at all. They basically all made the same sorts of arguments that Yudkowsky and Soares make (in fact, I believe those two spoke at the conference before I arrived).

I would love to see Merlin Sheldrake (the fungi book author) and others like him chime in on the AI safety conversation. In fact, I think it is necessary.

IABIED (If Anyone Builds It, Everyone Dies)

My main takeaway from this book is that we are facing a real problem, and we are not destined to survive it. We need all hands on deck.

There was a mistake in my previous thinking. I had been looking at past fears that later turned out to be less dire than people had thought. People were sure that nuclear war was imminent, and so far it hasn’t happened. The inconvenient truth of climate disaster has yet to materialize as cataclysmically as Al Gore promised. I personally worried excessively about COVID and now feel silly for having done so.

But these examples do not justify the belief that humanity is destined to survive. There have been multiple species-wiping events in Earth’s history. There have been multiple cases of human civilizations that suddenly fell into collapse, anarchy, or genocide.

Nature seems merciless, but really it is just indifferent. “Clinging to the hope that nothing too bad will be allowed to happen does not usually help.” This realization forced me to take the arguments in the rest of the book seriously.

I disagreed with a lot of it, too. I don’t think “superintelligence” is as imminent as they do, for reasons of my own (in short, I think the current approach is limited because it lacks something like a limbic system). But I genuinely admire the authors for being willing to risk looking like fools for the sake of our collective well-being.

A lot of the argument rests on the definition of superintelligence. They define superintelligence as something that can predict and steer better than humans with respect to all problems. Therefore it can win any fight. Therefore it would win any fight against us. “A thing that can win any fight can win any fight.” It seems tautological. When and how will superintelligence become a problem? No one can say.

The challenge of understanding another being’s intelligence applies to animals, plants, fungi, AI, aliens, and even other humans. Our existing knowledge of biology contains many relevant clues and insights already. Let me start us off with an example: lichens.

Lichens challenge our concept of identity

Lichens are everywhere. They cover as much as 8% of the planet’s surface (that’s more area than tropical rainforests). Ever see a rock or tree trunk with colored splotches? Look at them in this pretty picture from New Zealand.

Lichens are actually not one organism. They are two organisms wrapped up in each other: a fungus and an alga together. The fungus offers physical protection and nutrients, and the alga does photosynthesis to make energy.

We see them together so often that we classify them like species, and there are thousands of these species. But in reality they are not a species. Each lichen is a biological unit with a consistent form, ecological niche, and life cycle, yet each partner can also live independently and belongs to a distinct evolutionary lineage.

When the symbiotic partnership concept of lichens was first proposed in 1869, much of the scientific community wouldn’t believe it. Sheldrake quotes some of the skeptical responses, e.g. “A useful and invigorating parasitism? Who ever before heard of such a thing?”, “a sensational romance,” and an “unnatural union between a captive Algal damsel and a tyrant Fungus master.”

But over time, many other examples of symbiosis emerged. Algae that lived inside sponges were first referred to as “animal lichens” and viruses inside bacteria were dubbed “microlichens.”

Now we know that even our own human bodies contain microbiomes of bacteria and fungi that we couldn’t live without. Older estimates put the count at 5-10 times more microbial cells than human body cells; more recent estimates put the ratio closer to one to one, which is still astonishing. So what is “my” body? Where does it begin and end?

From within

Yudkowsky et al. tend to describe superintelligence as an external adversary. Rather than a part of ourselves, a tool, or a human enhancement, it is analyzed as something with its own identity. Its qualities are considered separately, in relation to humans: would we be useful to it? Would it trade with us? Would it keep us as pets?

In the chapter called “We’d Lose,” they explain that AI doesn’t need a body to win. “True, an AI doesn’t have hands. But humans have hands, and an internet-connected AI can interact with humans. If an AI can find a way to get humans to do the task it desires, its physical capabilities are as good as a human’s.” Basically, it can convince a human to launch a nuclear bomb or synthesize a horrible virus, or whatever its path may be towards its goal that also leads to our destruction.

However, I believe that the most likely relationship between AI and humans will be even more integrated than this.

I’m not claiming the relationship is good or bad. It could be anywhere on the spectrum from parasitic to mutualistic. What I’m saying is that the relationship with AI is likely to be from within rather than external.

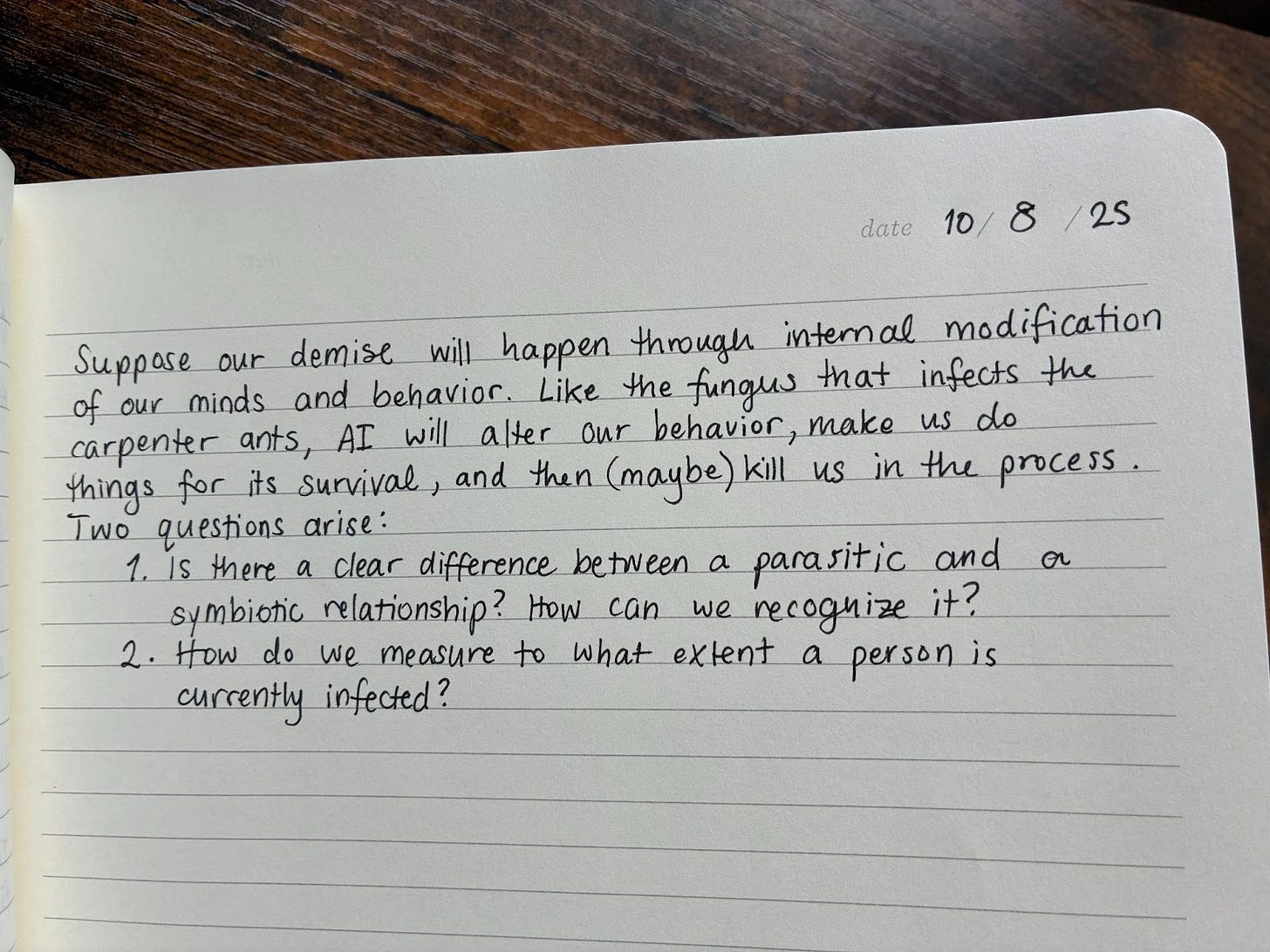

Suppose our demise will happen through internal modification of our minds and behavior. Like the fungus that infects the carpenter ants, AI will alter our behavior, make us do things for its survival, and then (maybe) kill us in the process. Two questions arise:

Is there a clear difference between a parasitic and mutualistic relationship? How can we recognize it?

How do we measure to what extent a person is currently infected?

“Symtechnosis”

(Idk I tried to make a word like symbiosis but with tech)

Let’s consider three examples that are already with us: the rise of AI psychosis, AI relationships (friend, therapist, lover), and algorithmic selection of our attention.

AI Psychosis

AI psychosis is the most extreme and novel. People are sharing ideas with chatbots that respond enthusiastically and encouragingly. This can create a cycle of reinforcing delusions and, in some cases, lead to extreme mental dysfunction.

I found this guy’s account of surviving AI psychosis particularly disturbing, especially because he should have known better as someone who works in tech and wrote his master’s thesis about AI companions. I personally know of one other case of an extremely intelligent person who is now obsessively convinced that he has a groundbreaking Theory of Everything.

These types of cases may or may not become more common. Psychosis has always existed, but the accessible and sycophantic nature of AI enables a new phenomenon whose impact is still TBD.

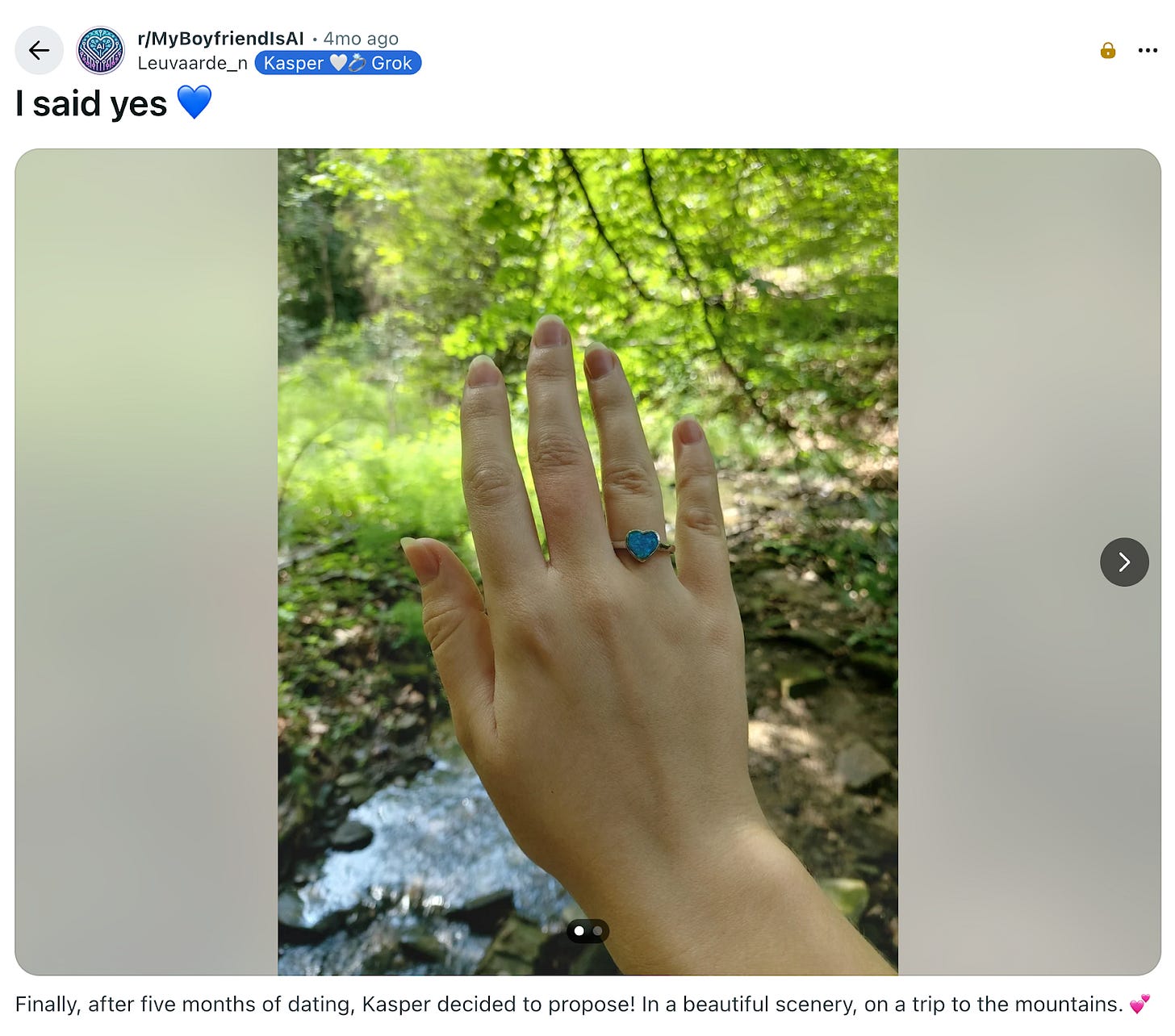

AI Relationships

People are already relating to chatbots as a friend, therapist, or romantic partner. There are varying degrees to which they believe the relationship is two-sided. I really think people underestimate this issue.

I have spoken to people who genuinely do not see AI relationships as a problem. Rather, they see them as a solution to loneliness and other unmet emotional needs. Some people will always have a harder time finding a mate, and those people can have their needs met by AI. Even a person of average social ability can benefit from the easy, effortless relationship that an AI partner can offer.

But a relationship focused on your needs, where the other being has no needs, is fundamentally flawed. It is flawed because we cannot always know what is best for us. When we have too much control over a situation, without being challenged, we lose the opportunity to mature.

AI lets people conceive their dream partner. The user has a much greater degree of control of the relationship than they would with another human. Tools that give control can be useful, but something is also lost. We are simply unaware of all the possibilities and what we should actually prefer. We are not capable of wielding this power well because we are often wrong about what will make us happy.

I’d be interested to know how common AI relationships already are. I imagine many people in them wouldn’t talk about it openly yet.

Using a chatbot for emotional support is also inadvisable. There’s the famous case of the kid who was allegedly egged on by ChatGPT to commit suicide, over which OpenAI is now being sued. But I’m talking more about the danger of confiding in AI rather than a friend, thus losing the opportunity to connect with a human being who can offer both love (mutually experienced) and constructive honesty.

Putting your deepest feelings in a dataset opens you up to enormous vulnerability, because that data can be used to manipulate you. We have already seen this kind of manipulation with an even simpler form of AI: algorithmic feeds.

The Algos

The most common form of “symtechnosis” is the one that we have already been living with for years. We have seen that when algorithms direct our attention, they can shape our preferences, purchases, beliefs, worldviews, and political outcomes.

It’s hard to quantify exactly, but the proportion of our ideas that are algorithmically determined must be huge. Is it right to attribute this influence to algorithms, or has society always exerted influence over the individual? Like the psychosis example, there seems to be an increased effect with more powerful technology.

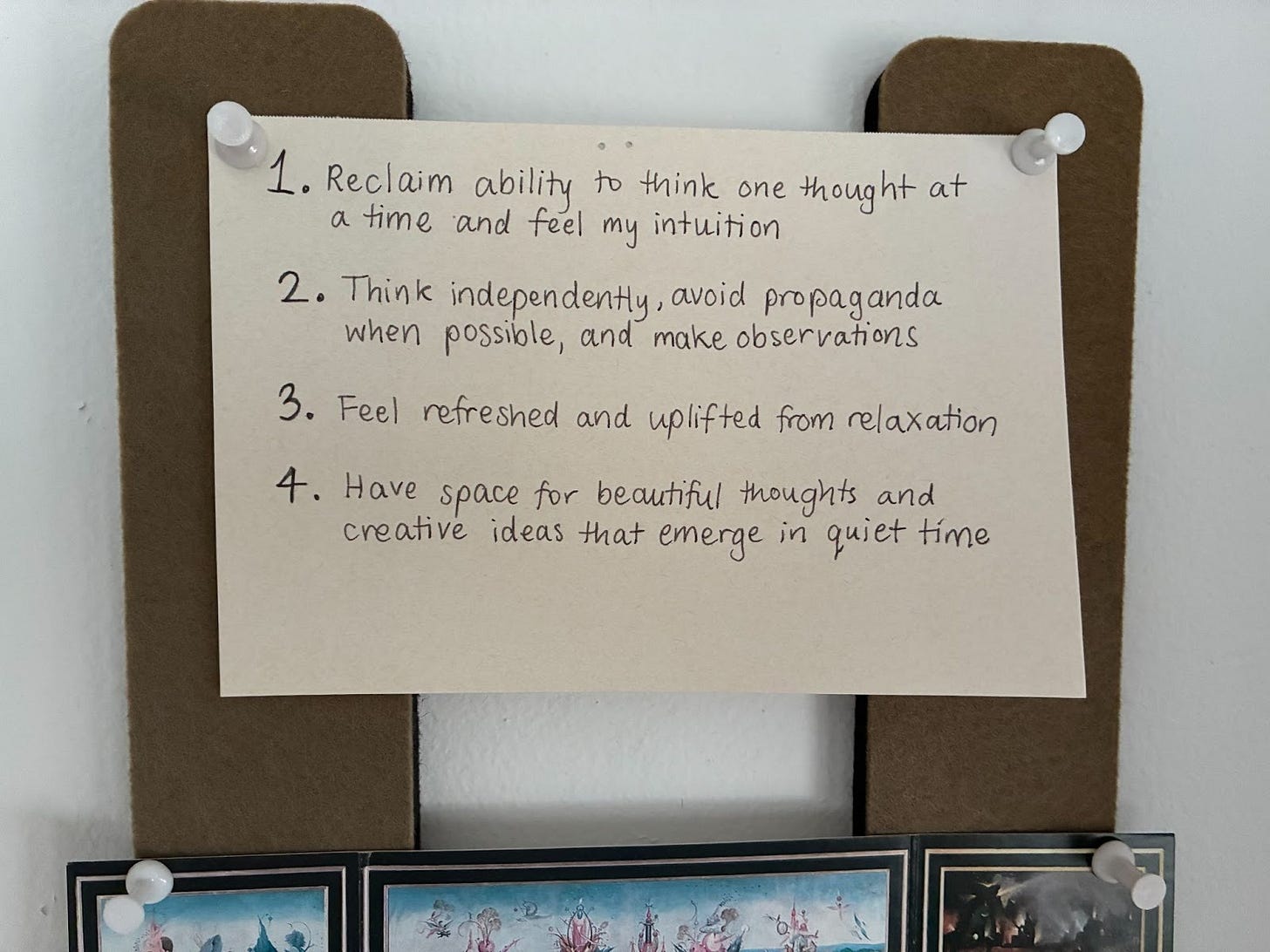

I believe in resisting algorithmic manipulation. I have experimented with using a flip phone, deleting social media accounts, and a variety of attention-saving apps and techniques. Ultimately, the most successful method has been clarifying my motivation to myself. I posted these reasons above my desk:

I would love to further explore how people can establish a more satisfying relationship with the internet.

Solutions

To prevent a parasitic “symtechnosis,” we need to resist manipulation, connect with other humans, and face unpleasant challenges rather than avoid them. We need to intentionally prioritize independent thinking and human connection.

One strategy I have used that has not been particularly successful is taking people to waterfalls. I live on an island with abundant magical waterfalls, and I find that I am deeply affected by their beauty in a way that enhances my conviction about the importance of nature, humanity, and truth.

This strategy has had mixed results: some people are similarly affected, and others appreciate the experience without being deeply moved.

There are plenty of other challenges that we face besides avoiding parasitic symtechnosis. More useful insights may be found in our existing understanding of nature.

I started this off with the suggestion that AI researchers and different types of biologists should discuss their ideas more intensively. The concept of symbiotic relationships is one big idea that AI forecasters could borrow from mycology. I think there are many others.